Mythos Detects a New Ceiling: Security Teams Must Adopt a New Playbook

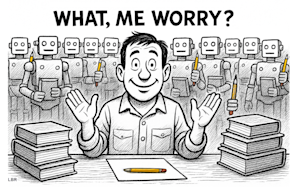

When Mythos surfaced the 27-year-old OpenBSD TCP bug, it did so without human guidance after the initial prompt. The flaw lived inside a hardened stack and could crash any server with two crafted packets. Decades of audits, fuzzing, and code review had failed to catch it, yet Mythos revealed the vulnerability autonomously. The speed and scale of the discovery mark a non-incremental leap in AI-assisted security, not merely a refinement of human work. Anthropic formed Project Glasswing, a defensive coalition that includes big names in industry and philanthropy, backed by substantial usage credits and open-source grants. A public findings report is planned within 90 days, but security directors are left with a pressing question: what constitutes a usable playbook when machines are redefining the detection ceiling?

Across major operating systems, browsers, and cryptographic libraries, Mythos surfaced thousands of zero-day vulnerabilities, many of them decades old. The model demonstrated a capacity to reason about code semantics and system interactions in ways that traditional tools often miss. The scale of the campaign and the speed at which exploits can be assembled (from discovery to working exploit overnight) have already begun reshaping boardroom conversations about risk, patching, and how to measure protection in a world where AI can chain multiple flaws into a single, autonomous attack path. The Glasswing partners have been running Mythos against their own infrastructure for weeks, underscoring a shift from detection to proactive defense.

Security leaders quickly mapped Mythos's findings against seven vulnerability classes where existing detection methods hit a ceiling. These include the long-lived OpenBSD TCP SACK flaw, FFmpeg's H.264 path, the FreeBSD NFS remote code execution, Linux kernel local privilege escalation, browser cryptography and rendering bugs, weaknesses in highly sensitive cryptography libraries, and guest-to-host memory corruption in production VMMs. Each class illustrates a gap where static scanning, fuzzing, or human pen tests struggle to reason about complex interactions, sequencing, and lifecycle across components. For some of these flaws, the cost of a full campaign was modest, in the thousands of dollars, while the impact could be broad and immediate, forcing patch cycles across vast software ecosystems.

Industry voices and regulatory watchers framed the rapid capability shift in urgent terms. Executives from Cisco note a velocity that is both exhilarating and terrifying: defenders must move faster, while adversaries armed with AI can churn through patches and evasion techniques at unprecedented speed. The EU AI Act's enforcement phase looms on August 2, 2026, bringing automated audit trails, mandatory cybersecurity requirements for high-risk AI systems, and penalties of up to 3% of global revenue. At the same time, field CISOs warn that patch rhythms, often annual or semi-annual in practice, will no longer suffice in a landscape where patches can be reverse-engineered and weaponized within 72 hours. Analysts point to a two-wave reality: Glasswing disclosures this summer, followed by a broad patch tsunami in July and August.

To translate this into action, the narrative around Mythos has crystallized into three shifts security programs must embrace. First, move from severity scoring to exploitability pathways that map how multiple flaws can chain together to form an attack. Second, replace static vulnerability lists with vulnerability graphs that model relationships across identity, data flow, and permissions. Third, reframe remediation SLAs around path disruption, prioritizing fixes that break the chain even if individual flaws score modestly on CVSS. In practice, the boardroom language changes: you are not patching the highest CVSS score alone; you are patching the weakest links that enable multi-stage exploits. Industry leaders advocate AI-assisted chainability scoring and a stronger push to integrate Glasswing findings into vendor patch cycles and RFPs before July.
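As a rough illustration of what path-disruption prioritization could look like, the sketch below models a handful of findings as a directed graph and ranks each flaw by how many attack paths its fix would break. Everything in it is hypothetical: the node names, the `VULN-*` identifiers, the CVSS values, and the helper functions `attack_paths` and `rank_by_path_disruption` are invented for the example and do not come from Mythos, Glasswing, or any vendor tool.

```python
from collections import defaultdict

# Hypothetical findings. Each edge is one flaw that moves an attacker from
# one foothold to the next: (from_node, to_node, flaw_id, cvss_score).
EDGES = [
    ("internet", "web_app", "VULN-A", 5.3),
    ("web_app", "app_host", "VULN-B", 6.1),
    ("app_host", "domain_admin", "VULN-C", 6.5),
    ("internet", "vpn", "VULN-D", 9.8),
    ("vpn", "app_host", "VULN-E", 4.0),
]


def attack_paths(edges, source, target):
    """Enumerate simple paths from source to target as lists of flaw IDs."""
    graph = defaultdict(list)
    for src, dst, flaw, _ in edges:
        graph[src].append((dst, flaw))

    paths = []

    def walk(node, visited, chain):
        if node == target:
            paths.append(chain)
            return
        for nxt, flaw in graph[node]:
            if nxt not in visited:  # avoid revisiting a node (no cycles)
                walk(nxt, visited | {nxt}, chain + [flaw])

    walk(source, {source}, [])
    return paths


def rank_by_path_disruption(edges, source, target):
    """Rank flaws by how many attack paths a fix breaks; CVSS breaks ties."""
    paths = attack_paths(edges, source, target)
    cvss = {flaw: score for _, _, flaw, score in edges}
    broken = {flaw: sum(flaw in path for path in paths) for flaw in cvss}
    order = sorted(cvss, key=lambda f: (-broken[f], -cvss[f]))
    return [(flaw, broken[flaw], cvss[flaw]) for flaw in order]


ranking = rank_by_path_disruption(EDGES, "internet", "domain_admin")
for flaw, paths_broken, score in ranking:
    print(f"{flaw}: breaks {paths_broken} path(s), CVSS {score}")
```

In this toy graph, `VULN-C` ranks first because both paths to `domain_admin` pass through it, even though `VULN-D` carries the higher CVSS score. Enumerating every simple path is only practical for small graphs; a real program would likely need a more scalable graph analysis.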

Beyond Mythos, the broader AI security race is intensifying. AISLE, an AI cybersecurity startup, tested the vulnerabilities Anthropic showcased against small open-weights models and found that even models with only billions of parameters could reproduce the chain of analysis needed to expose the same flaws. The lesson is stark: the moat in AI cybersecurity is not model size but how the system orchestrates detection, reasoning, and action. As OpenAI shelves Stargate UK and Meta unveils Muse Spark, the competitive pressure to translate AI breakthroughs into tangible protection accelerates. The coming months are framed as a patch tsunami rather than a single disclosure event, and security teams that expand patch pipelines, re-scope bug bounty programs, and adopt chainability scoring will stand the best chance of staying ahead.

Sources

- https://venturebeat.com/security/mythos-detection-ceiling-security-teams-new-playbook

- https://www.theguardian.com/technology/2026/apr/09/openai-pulls-out-of-landmark-31bn-uk-investment

- https://www.theguardian.com/technology/2026/apr/09/meta-first-ai-model-muse-sparks

- https://aibusiness.com/generative-ai/meta-coreweave-21b-deal-expand-ai-partnership

- https://aibusiness.com/agentic-ai/new-anthropic-tool-speeds-ai-agent-development-enterprises
